The History of Technology is Written by the People Who Survived It.

We keep saying technology always creates new jobs. It does. The question is whether the same people who lost the old ones ever get them.

Every generation gets handed a story about technology and jobs, and the story always ends the same way: we adapted, new roles appeared, and things worked out. This is technically true. It’s also the kind of truth that papers over a lot of suffering.

The steam engine came. Factory work replaced agricultural work. Electricity arrived and, over a few decades, reorganized entire industries. Computers showed up in the 1980s and everybody panicked, then the economy somehow absorbed them.

The internet killed travel agents and video rental clerks and classified ad salespeople, but it created software engineers and logistics specialists and about forty job categories that didn’t exist in 1995. The pattern is real. It’s even reassuring, if you look at it from the right angle, at the right distance.

But ask yourself who gets to tell that story. Not the handloom weavers in early 19th-century England whose incomes collapsed over two decades while factories gradually came online. Not the generation of workers who reached middle age at exactly the wrong moment, too old to retrain and too young to retire. Not the mill towns that hollowed out in the 1970s and 1980s when manufacturing automation and offshoring hit simultaneously, and which in some cases still haven’t recovered.

History, written from a distance, looks like adaptation. Lived from inside, it sometimes looks like getting left behind while someone else benefits.

I’ve been following the AI and employment debates for a few years now, reading the research, paying attention to what’s actually happening in different industries, and I find myself in a genuinely uncomfortable place. I think the historical reassurance is real and I think it might not be enough. Those two things can coexist.

The Luddites get invoked a lot in these debates, usually as a cautionary tale about people who were wrong. What gets left out is that the Luddites weren’t wrong about what was happening to them.

They were skilled textile workers whose specific expertise was being devalued faster than they could adapt. Their assessment of their immediate situation was accurate. They just couldn’t see the industrial economy that would eventually absorb their descendants into different kinds of work.

The fact that things worked out over a century doesn’t mean their experience wasn’t real.

The pattern that’s supposed to reassure you

Technology has always displaced jobs; new jobs always appear, and humans always find a way. The people telling you to panic about AI are the same type of people who worried about the printing press or the tractor.

Or, for that matter, the people in the 1960s who were convinced that automation was going to produce mass unemployment within a decade. President Johnson actually convened a commission on it in 1964. The commission concluded that automation would not cause permanent unemployment. They were right, in the aggregate.

There’s something to this. In 1900, roughly 40 percent of American workers were in agriculture. Now it’s under 2 percent. That’s an enormous displacement, and yet there was no permanent unemployment catastrophe.

The economy absorbed those workers over generations, first into manufacturing and then into services. If you look at the broad employment numbers from 1900 to today, the story is one of continued high employment through repeated technological disruption.

David H. Autor at MIT has done some of the most careful work on this. His research tracks how automation tends to hit what he calls “middle-skill, routine” jobs first: bookkeepers, assembly line workers, data entry clerks. Jobs that follow a predictable set of steps.

Meanwhile, low-skill manual work (cleaning, construction, elderly care) and high-skill cognitive work (management, creative work, medicine) were harder to automate. His work through the early 2010s mostly supported the “adaptation” story, though he’s been more cautious since, particularly in papers he’s written about the 2016-2020 period.

The reassuring version of history is accurate about outcomes over long periods. What it’s less honest about is the distribution of those outcomes across time and across people.

I should say: I find myself persuaded by the historical pattern, most of the time. Then I spend a day reading about what’s happening in customer support departments right now, and the persuasion wobbles a bit.

A company I know of reduced its customer service headcount by about 60 percent over 18 months after deploying AI chatbots. The overall economy might eventually absorb those workers. The individual people had a very rough few months, and for some of them it stretched past a year.

What steam and electricity didn’t touch

Here is what sets AI apart, or at least what feels different to me, though I’ll admit my confidence fluctuates depending on which week you ask me.

Previous technologies replaced physical labor first. The steam engine did what human muscles did, but faster and cheaper. Electricity automated what human hands did, but at scale. Industrial robots in the 1970s and 1980s replaced workers who moved and assembled things.

Even early computers replaced clerical work, which was physically performed by people sitting at desks processing paper.

Cognitive labor was largely untouched. The person who analyzed the data the computer processed still had a job. The person who wrote the legal brief based on the research the paralegal compiled still had a job.

The person who designed the product the factory produced still had a job. If your work involved thinking more than doing, previous waves of automation generally left you alone.

AI doesn’t respect that division. Current AI systems can draft legal briefs, analyze medical scans, generate code, produce marketing copy, design certain categories of graphics, and do passable financial modeling. Not perfectly. Not without human oversight.

But well enough to change what “human oversight” means in practice. Fewer people supervising more AI output, rather than people doing the work themselves.

There’s also something different about the speed of improvement. The steam engine took decades to develop from a novelty into something that genuinely restructured an economy. The systems doing cognitive work today are meaningfully more capable than the systems from three years ago, in ways that feel faster than the typical technology adoption curve.

I’m not sure how much weight to put on this, because the history of technology is full of moments where people thought change was accelerating and then it plateaued. But I don’t want to dismiss it either.

A friend of mine who runs a small design agency told me in 2022 that AI image tools were a toy. In 2023 he said they were useful for mood boards. In early 2025 he laid off one of his three junior designers. He didn’t say at the time that it was because of AI. But I noticed, and he later admitted it was.

The relevant question isn’t whether AI can replace a lawyer. It’s whether AI can replace three-quarters of the hours a junior lawyer at a mid-sized firm currently bills. Those are different questions with different answers.

And a version of that same question applies to radiologists, financial analysts, junior software engineers, and a fairly long list of roles that, until very recently, seemed safely in the “cognitive work” category that automation doesn’t touch.

The gap nobody talks about

In 1878, telephone operators were mostly men. Within a decade, the industry had switched almost entirely to women, partly because telephone companies decided women had better temperaments for the work and partly because they could pay women less.

The men who’d held those jobs went somewhere else. The women who replaced them held those jobs for several decades, until automated switching systems began replacing them in the 1920s and 1930s.

I’ve been thinking about that story lately, though I can’t quite make it prove what I want it to prove. The operators did lose their jobs eventually. But the industry grew so fast that even as individual exchanges automated, total employment in telecommunications kept rising for decades. The operators who got displaced in one place often found work at the companies building the new equipment or managing the new systems.

Last spring I was having lunch with a friend who does HR consulting, and somewhere around the time the bill came and we started arguing about who got it, she said something that stuck with me. She said the companies she works with aren’t thinking about whether to use AI. They’re thinking about how many fewer people they need to hire this year. Not layoffs, mostly. Just smaller teams. Slower backfills. The kind of headcount reduction that doesn’t make headlines.

Does the telephone operator story mean retraining works? Partly. Does it mean the workers who got displaced in 1930 were fine? Not necessarily.

What actually worries me about the current moment isn’t whether new jobs will exist. They probably will. It’s the speed. The transition from handloom weaving to factory work took roughly fifty years. The transition from human telephone operators to automated switching took maybe thirty.

These are long enough timelines for a workforce to gradually shift. The displaced workers age out, the younger generation enters a different labor market, the pain gets distributed across time rather than concentrated on a specific cohort.

If AI capabilities keep compressing that timeline, the question isn’t whether the economy adapts. It’s whether the economy adapts fast enough to matter within an individual working life. A 52-year-old paralegal whose skills become less valuable over three years doesn’t have fifty years to wait for the new equilibrium.

Neither do the people who would have filled the thousands of entry-level programming jobs that used to serve as the training ground for senior engineers. If junior roles shrink because AI can handle that work cheaply, where do the senior engineers of 2035 come from? That’s a question I don’t see asked often enough.

There’s an entire argument here about what governments and companies should do about retraining. I don’t find myself particularly hopeful about it, based on how previous government retraining programs have gone, but I also don’t have good data on what actually works at scale, and the people who study this disagree sharply.

I don’t see obvious reasons why an AI retraining program would work dramatically better, though I’d genuinely like to be wrong about this.

Same fear, different century

In 1589, William Lee invented a knitting machine and brought it to Queen Elizabeth I hoping for a patent. She refused, reportedly saying she feared the machine would put her subjects out of work. Lee eventually took his invention to France.

People have worried about this for a very long time. And for most of that time, they were wrong, at least in the aggregate.

There’s a psychological mechanism at work here that’s worth naming, though I don’t know the exact study. Humans are good at imagining what gets destroyed and bad at imagining what gets created.

When the internet was arriving in the mid-1990s, it was easy to see that travel agents would lose business. Nobody imagined the app economy, or that there would someday be a profession called “influencer,” or that roles like these would employ millions of people globally.

New job categories are, almost by definition, unimaginable before they exist. If I’d asked someone in 1990 to name the skills they’d need to become a successful “social media manager,” they would have had no idea what I was talking about.

This cuts both ways, though. If we’re bad at imagining the new jobs that AI will create, we might also be bad at imagining just how many existing jobs it erodes. The imagination failure works in both directions.

A 2013 paper by Carl Benedikt Frey and Michael Osborne at Oxford estimated that 47 percent of US jobs were at high risk of automation over the next decade or two. That figure got repeated everywhere. It was also somewhat contested, and the follow-up research was messier.

A 2016 OECD study that looked at the tasks within jobs rather than at whole occupations got numbers closer to 9 percent across 21 OECD countries. The spread between 9 percent and 47 percent tells you how uncertain the methods are, not how confident we should be in either number. Both studies set out to answer roughly the same question and got results that differ by about a factor of five.

The prior history of panics-that-turned-out-fine is real evidence. It just isn’t conclusive evidence. And I notice that the people invoking history most confidently tend to be people who are well-positioned in any scenario: academics with tenure, senior knowledge workers, people whose specific skills are among the last things AI will touch.

That doesn’t make them wrong. But it’s worth noticing who’s most comfortable with the historical argument.

Which humans, exactly

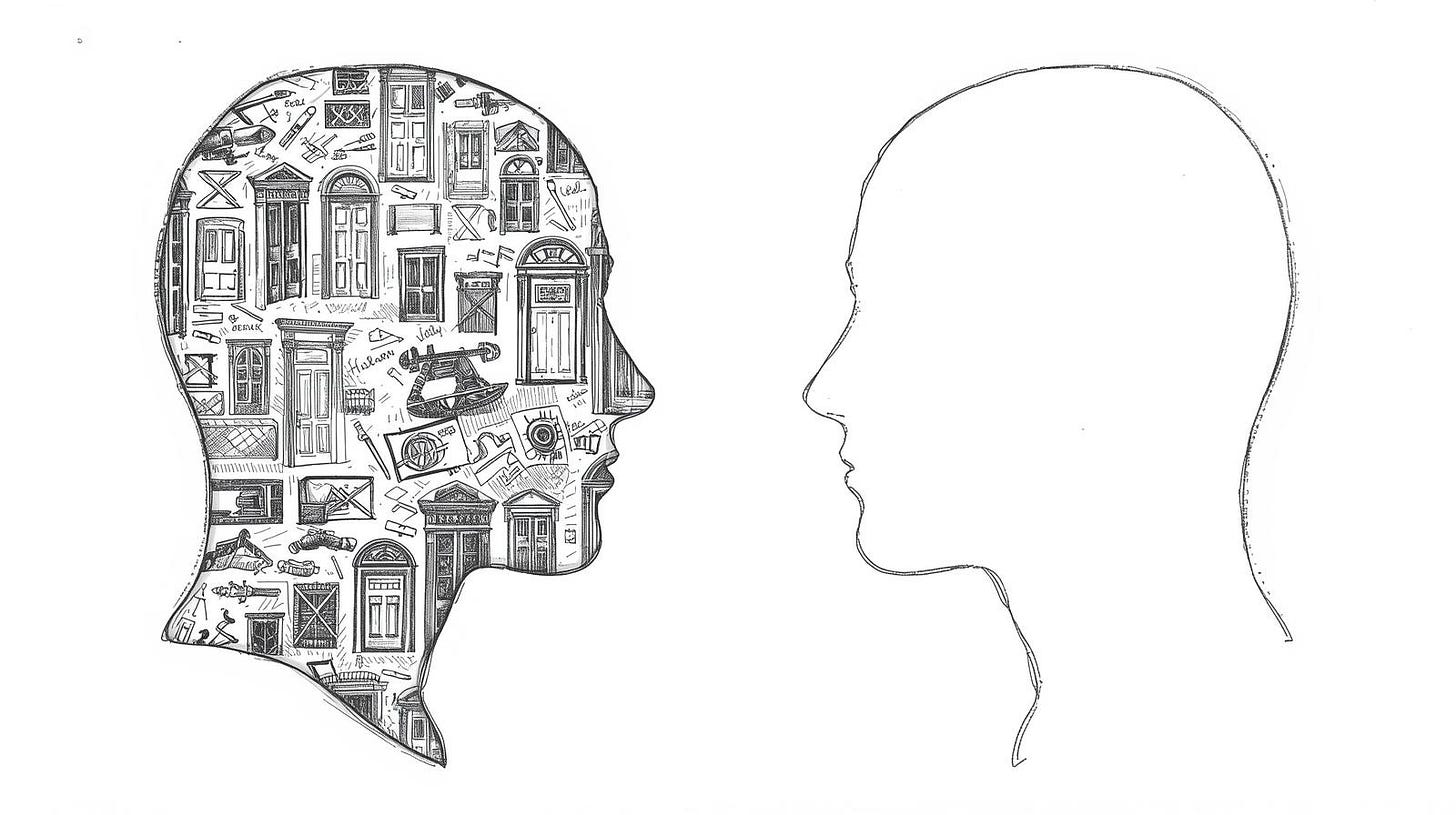

I keep coming back to a distinction that doesn’t get made clearly enough in these arguments.

When people say “technology creates new jobs,” they usually mean: new jobs exist in the economy after the technology arrives. They don’t necessarily mean the same humans who lost their old jobs get the new ones.

Sometimes that happens. Often it doesn’t, or not completely, or not for the people who are in the hardest circumstances: older workers, workers in specific geographies where the old industry was concentrated, workers without the educational background to make the transition.

The industrial revolution created enormous wealth and eventually raised living standards dramatically. It also produced, in the short and medium term, some genuinely miserable conditions for a lot of working people. Economic historians debate how long that adjustment period was.

The range of estimates is sobering. Some put it at 30 years before living standards for average workers began clearly improving, some put it longer. A generation that got ground up in the first decades of industrialization didn’t live to benefit from the prosperity it helped create.

What I can’t tell you, and what nobody can tell you honestly, is which category the current AI transition falls into. Is this another electricity moment, where the displacement is real, but the new opportunities are large enough and fast enough to absorb most workers?

Or is something genuinely different happening, where the cognitive nature of what’s being automated changes the math in ways the historical pattern doesn’t capture?

I’ve spent time reading people who do labor economics professionally, and the ones I find most credible tend to express genuine uncertainty rather than confident predictions in either direction. The confident predictions I’ve seen have an unfortunate tendency to be wrong within a few years, in both directions.

The people who were certain in 2012 that AI was nowhere near replacing professional knowledge work look bad now. The people who were certain in 2020 that GPT-3 was about to eliminate most white-collar jobs also don’t look great.

There’s a version of this story where the next twenty years look like a slightly rougher version of the last forty: some workers lose, more workers gain, the overall employment numbers stay relatively healthy, and we debate afterward about distribution.

There’s another version where cognitive automation hits fast enough, broadly enough, that the labor market can’t absorb it cleanly. There might be a third version we can’t see yet, involving job categories that don’t exist and won’t for another decade.

Both of those first two versions are consistent with the evidence we have right now. The honest answer is that we’re running an experiment without a control group, and the results won’t be clear for another decade at least.

What’s particularly strange about this moment is that the people building the technology often acknowledge they don’t know what the labor market effects will be, while simultaneously continuing to build as fast as possible. I don’t have a strong view on whether that’s irresponsible or just the nature of how technologies get developed, but it’s worth noting.

The question isn’t whether humanity survives this. It probably does. The question is which humans get to surf the transition and which ones get caught underneath it, and right now I don’t think anyone has a confident answer to that, including the people who sound most confident.

The people being most definitive in both directions, the “this is fine” camp and the “civilization-ending disruption” camp, seem to be working from the same incomplete evidence and reaching opposite conclusions because of their priors, not their data.

Though I’ll say this: the historical record suggests we’re better at helping the people who lose when we actually think hard about them in advance, rather than assuming the market will work it out on its own in due course.

Whether we’re actually doing that this time is a different question, and the conversations I hear in policy circles suggest we’re mostly still in the assumption phase, hoping the historical pattern holds and the adaptation happens on its own schedule without much active intervention.

Right now, I feel like a textile worker watching the first steam-powered looms arrive on the factory floor. Is this actually it? Or am I just too close to these AI tools, and to the power users who lean on them heavily every day, so that I overestimate what they can do and catastrophize their impact on the broader job market?

I’ll have my answer soon enough. I hope I’m wrong.
