You're not losing your job. You're losing the reason it existed.

AI isn't taking your job. It's revealing that your job never required human judgment. The shift is from doing work to owning decisions.

There’s a version of this conversation everyone’s been having. It goes: AI will take jobs. Or it won’t. Or it’ll create new ones. Or we’ll be fine. Or we won’t.

I’m genuinely tired of the jobs debate. Not because it’s unimportant. Because it’s the wrong argument.

The thing AI is actually changing is more uncomfortable than job loss. It’s this: for a lot of people, the thing they’ve been paid to do, the core activity they call their job, doesn’t require human judgment. Never did. AI is just making that visible.

That’s the shift. Not headcount. Not automation. Not even productivity.

The shift is from doing work to owning decisions. And those are not the same thing at all.

The comfortable middle is already gone

Before getting into what changes, it helps to understand what’s already happened. Because the “AI is coming” framing is weirdly out of date. The middle of the labor market has been hollowing out for two decades.

Economists David Autor, Lawrence Katz, and Alan Krueger documented the early version of this pattern in the late 1990s, showing how computerization started eliminating routine cognitive work while high-skill and low-skill roles stayed relatively intact.

David Autor extended that work significantly, and by the early 2010s the evidence for what he called “job polarization” was fairly well established: the roles most at risk weren’t the lowest-paid but the mid-wage, routine-heavy ones. Data entry clerks. Bookkeepers. Quality control auditors whose entire job could be described in ten rules.

That pattern didn’t stop. It accelerated.

What AI does is push the boundary upward. The “routine” category now includes things that used to sound complicated: drafting legal summaries, writing first-pass code, analyzing competitive positioning, building financial models, responding to customer escalations based on policy guidelines. These tasks look professional. They’re cognitively demanding in a surface sense. They require training, familiarity with jargon, sometimes years of practice to do quickly.

But they share something with the bookkeeper’s job: given enough examples and clear success criteria, they can be done without anyone actually making a call.

That’s the tell. Not whether the task is hard. Whether it requires a call.

A junior analyst who spends sixty percent of their week pulling data, formatting it, and summarizing it for a director to interpret is not making calls. The director is making calls. The analyst is producing inputs. AI produces inputs faster, cheaper, and without burnout.

That’s not the uncomfortable part, though. It’s not that the analyst might lose their job. It’s that the analyst’s job was always the director’s job, outsourced downward for cost and convenience, and AI just made that obvious.

MIT economist David Autor noted in a 2022 NBER working paper that middle-skill job displacement has been ongoing since roughly the 1980s, and that what distinguishes the current wave is the speed of capability expansion into tasks previously considered non-automatable. His concern wasn’t apocalypse. It was the compression of the timeline for adaptation.

That compression is what makes this moment different. Not the destination. The speed. And the fact that this time, the capability expansion is hitting roles that have traditionally felt quite safe: analyst, associate, coordinator, specialist. The cushion between “I do knowledge work” and “my role is economically exposed” is thinner than it has ever been before.

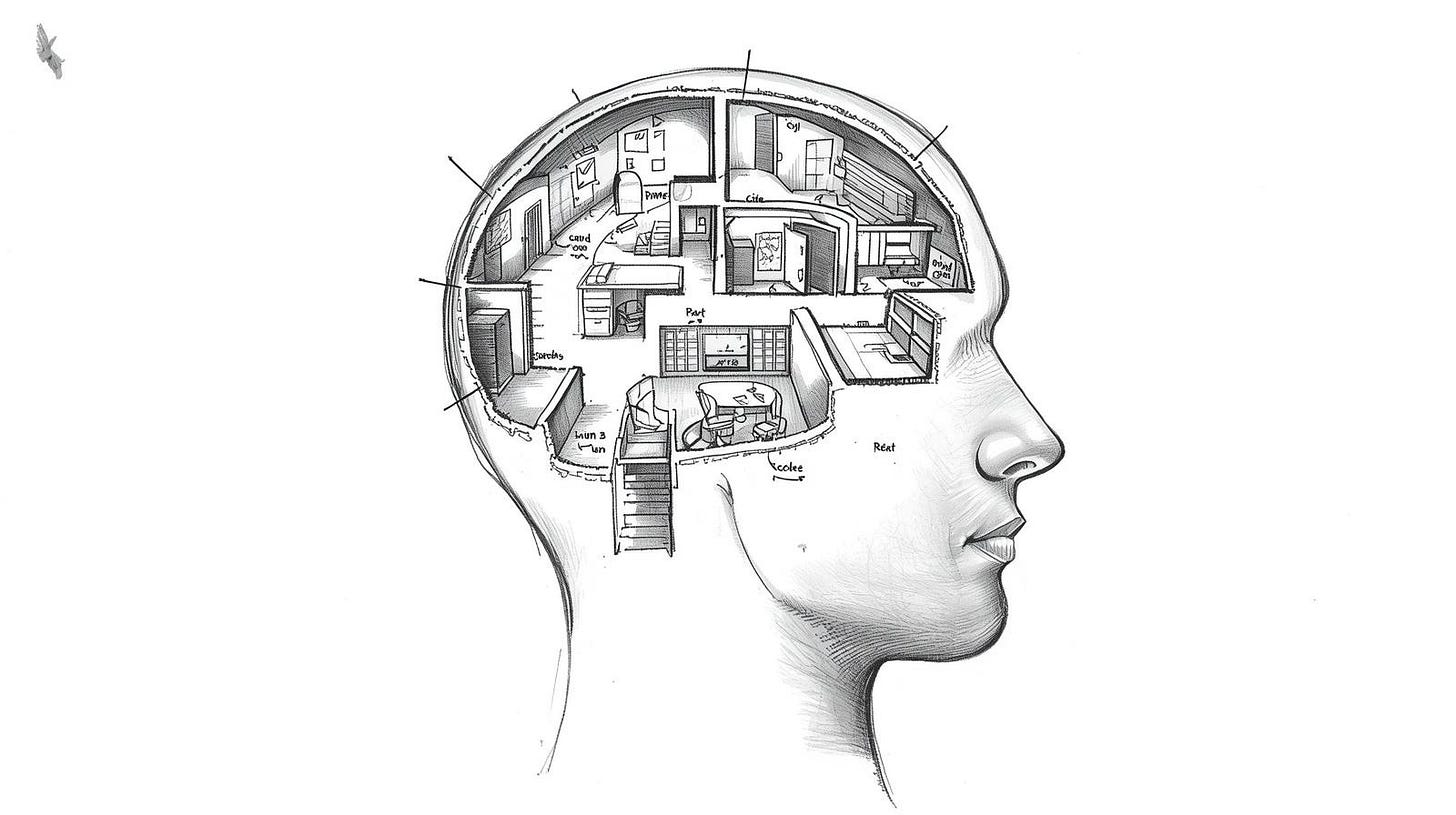

What “knowledge work” actually meant

This is happening in nearly every org I know. A team gets built around specialization. You need someone who knows the analytics platform. You need someone who knows the legal compliance requirements. You need someone who knows the presentation layer of the client relationship. And you need someone who can write.

Each of those people becomes the organization’s memory for their slice. The system works. It’s inefficient, but it works. The knowledge lives in people, so the people have to stay.

There’s a mild irony in how much of what we called “knowledge work” was really “organizational memory work.” The knowledge wasn’t insight. It wasn’t judgment. It was familiarity. Knowing where things lived. Knowing which form to use. Knowing that Lucy in legal always takes three days to turn around a contract so you have to plan for that. Knowing the PowerPoint template is actually version 4.2 from February, not the one on the shared drive.

This is not a small amount of what knowledge workers do. In my experience it’s a large amount. Maybe most of it for mid-level roles.

Management consulting, interestingly, built an entire industry around this problem. When consultants come in and spend two weeks just “understanding the org,” what they’re doing is acquiring organizational memory fast enough to be useful. The firms that got very good at this, McKinsey, Bain, BCG, developed structured approaches: process maps, knowledge repositories, interview frameworks. They were not solving intellectual problems. They were solving organizational amnesia problems efficiently.

The consultants who built long careers were never the ones who could acquire organizational knowledge fastest. They were the ones who could sit with a CFO who’d just gotten contradictory data from three departments and help her figure out what to actually do. Not what the data said. What to do.

AI dissolves the organizational memory problem. That capability, the thing most of those mid-level roles were actually protecting, evaporates.

What remains is the judgment layer. And we have not, as a society, trained people for that in any systematic way.

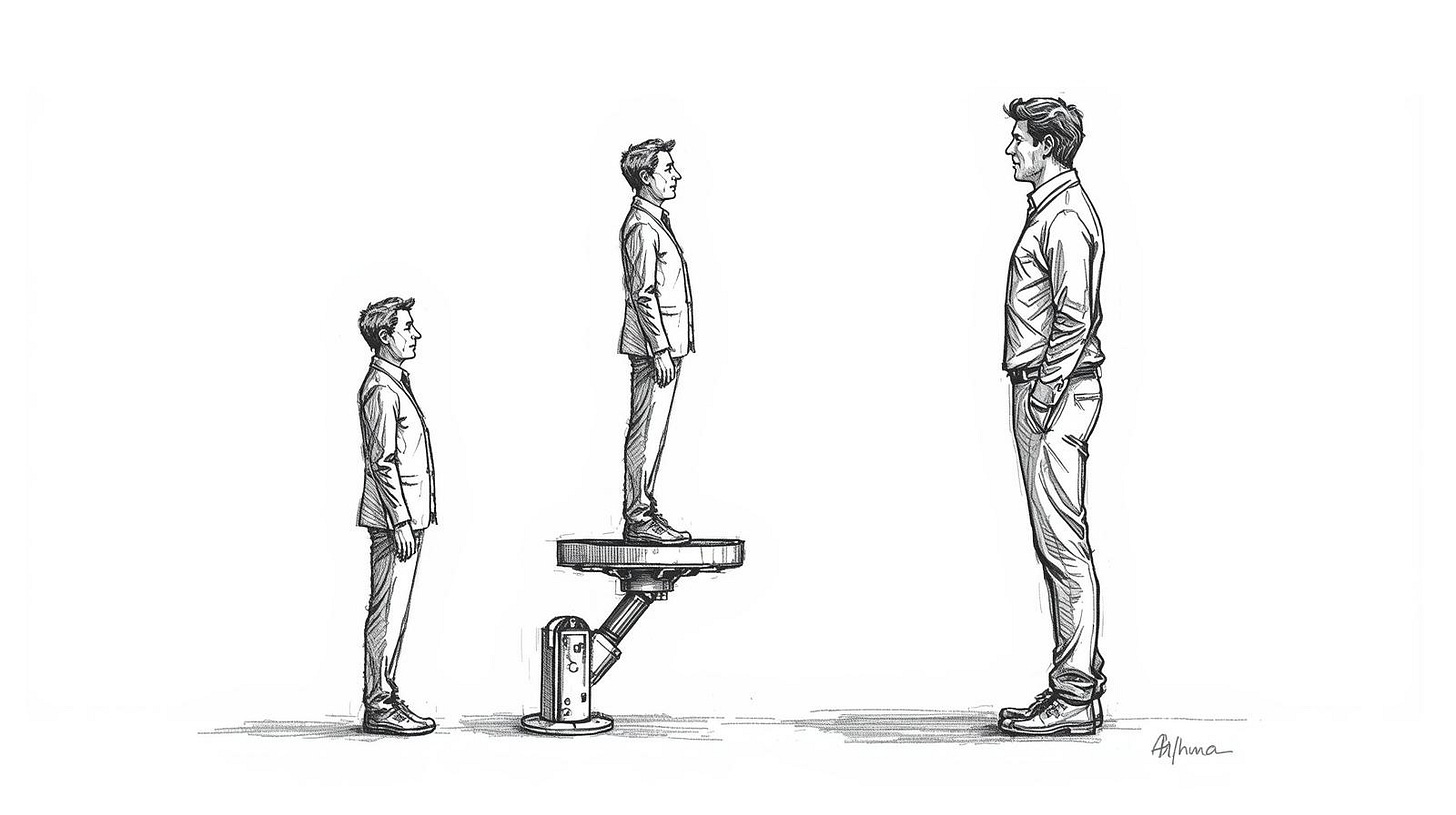

The difference between doing and deciding

A few weeks ago, I found a National Bureau of Economic Research paper written by Erik Brynjolfsson, Danielle Li, and Lindsey Raymond that I’ve thought about more than almost anything else in this space. They studied the deployment of an AI assistant in a large software customer service operation and found that the workers who improved most were not the most experienced.

They were the less experienced, lower-skilled workers, who could now perform more like experts because the AI was handling the retrieval and pattern-matching work. The experienced workers improved less, percentage-wise, because they already knew the patterns.

The finding I keep returning to is a follow-on implication: if AI lifts average performance toward expert performance in retrieval-heavy tasks, what distinguishes the expert now?

Not faster retrieval. Not more accurate pattern matching. Not a better memory for the organizational manual.

What distinguishes the expert is knowing when the manual is wrong.

That sounds simple. It is not simple. Knowing when a rule should be broken, when a client relationship is about to go somewhere no process was designed for, when a decision looks right on the numbers but feels wrong for reasons that require articulating, when you should escalate versus absorb a risk yourself, when to trust a good outcome that arrived via a bad process.

This is not knowledge. It’s judgment. And judgment comes from having been wrong in consequential ways enough times to have a calibrated sense of where you’re likely to be wrong again.

I’m not fully confident that judgment can be taught or that organizations will reward it properly even when they need it. When I was working in an agency, I saw companies say they wanted people who “challenge the status quo” and then freeze out the first person who actually did. The gap between organizational rhetoric about judgment and organizational tolerance for the actual exercise of judgment is large. I don’t know how to close that gap. I’m not sure this piece closes it.

What I’m confident about is that it’s the right problem. The roles that will hold value are not the roles where humans beat AI at retrieval or speed. They’re the roles where the human is accountable for a call, where the output requires owning a position, where being wrong has a name and a face attached to it.

Responsibility is becoming the scarce resource. That’s new. Or at least it’s happening faster than it ever has before.

Teams built for specialization will break

Most organizations are still structured as if information is expensive to move.

The departmental model, marketing over here, analytics over there, product somewhere else, legal on a different floor or a different continent, exists because historically you couldn’t give everyone access to everything. So you hired specialists. Put them in groups. Built handoffs between the groups. The handoffs created friction. The friction created coordination roles. The coordination roles created more friction. You end up with an org chart that’s a map of information bottlenecks, not a description of how work actually flows.

AI removes a lot of the reason information is expensive to move.

A generalist with good tools can now perform work that would have required three specialists six years ago. Not in every domain. Not for the hardest problems. But for a meaningful percentage of the problems organizations actually face in a given week.

The structural response to this is not complicated in concept: smaller teams, flatter reporting, roles organized around outcomes rather than functions. You stop asking “what’s your job title” and start asking “what problem are you solving.”

In practice it’s very disruptive. Entire departments whose value proposition was managing the complexity of their own existence become hard to justify. Middle management layers that existed to translate between specialists and executives get squeezed from both sides. The executive can now consume a synthesized briefing from AI tools that would have taken three analysts two days to produce. The specialist can now self-deploy in ways that used to require a manager’s bandwidth.

At this moment, I’m uncertain how fast this actually happens at scale. Organizations resist structural change with more force than they resist almost any other kind of change. The people who benefit from the current structure, and there are many of them, do not make transitions easy.

Regulation may slow adoption in some sectors. Some industries, healthcare, financial services, legal, are subject to oversight frameworks that add friction to every deployment of automated tooling.

A 2-to-4 year horizon is probably right for the early-adopter companies, the ones already organized for speed. For large traditional enterprises? Maybe 5 years, maybe a decade, maybe longer. Who knows. Structural change at a Fortune 500 is not a product sprint.

But the direction is clear. And people who have built their careers entirely inside a specialization, who’ve never been asked to own an outcome from beginning to end, who’ve always been insulated by role definition from having to make a call that wasn’t covered in the job description, are in a really tough spot.

Not because AI is taking their job. Because the organizational logic that required that job in the first place is being dismantled.

This is not going to feel like progress

I want to end here without the standard move, which is to pivot to “but here’s how you adapt.” I’ll say a few things that are actually useful. But not before saying something honest.

This is going to be hard for a lot of people. Not in a “temporary disruption before everything gets better” way. In a “the path you were on is gone and the new one requires something you haven’t been asked to develop” way.

Maybe there won't be a “jobs apocalypse” due to AI, but there will be job chaos, as Gartner predicted.

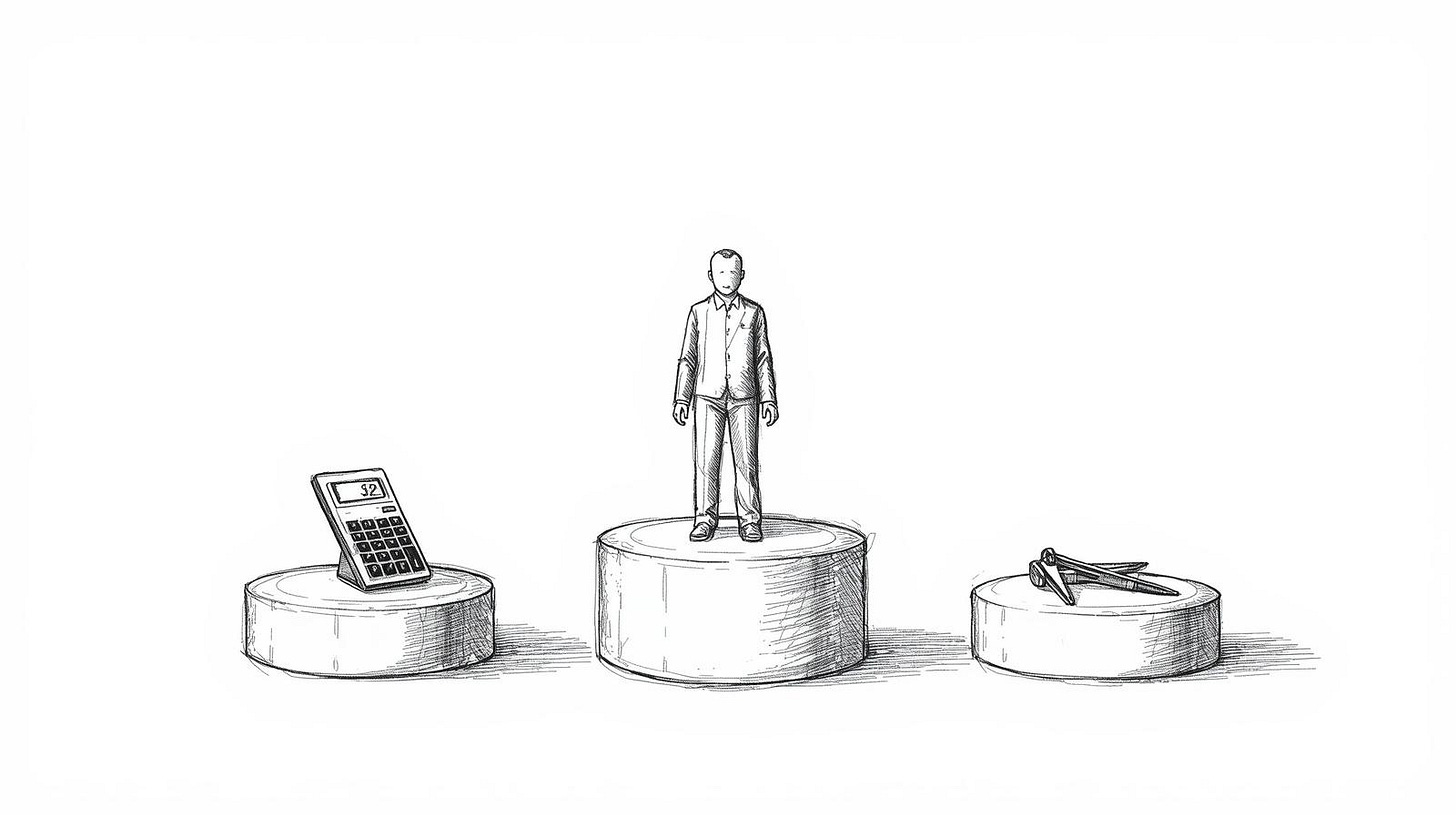

The workers most at risk are not only the people who think they’re most at risk. The junior analyst who’s worried about AI taking their entry-level job is right to be concerned about the market.

But the mid-level manager who assumes their judgment is safe because they’ve been “doing strategy” for ten years should be equally worried.

Especially if the strategy work mostly involved collecting inputs from reports, formatting them into a deck, and presenting a consensus recommendation. That’s not strategy. That’s a well-dressed coordination function.

The people who will come through this in a strong position share a few traits, and none of them are credentials. They can hold ambiguity without freezing. They take positions even when the data is imperfect. They’ve been wrong in ways that were costly and they know it and they adjusted because of it, not despite it. They’re accountable for outcomes in a way that means someone calls them when something fails.

Junior roles are almost certainly shrinking in the near term; the entry-level slowdown among workers in their early twenties is showing up in hiring data pretty clearly. But whether those roles disappear or just mutate into something nobody has named yet, I genuinely don’t know.

The historical record says new role categories emerge. What those categories require and whether the people being frozen out now can access them later is a different and harder question. I keep reading optimistic takes about it and I keep not being fully convinced either way.

Recent McKinsey Global Surveys on AI adoption have highlighted that most large organizations still feel their workforce is not fully prepared for AI-augmented work roles, with many continuing to focus on initial experimentation and training rather than deep integration.

Which is a polite way of saying that most large companies know they have a problem and many of them are still doing workshops about it.

The honest advice is not complicated to say. Be the person who makes the call. Get into positions where you’re accountable for outcomes, not just contributing to them. Learn to be wrong in front of people and recover from it fast, because that’s what building judgment actually looks like.

But I should acknowledge that not everyone has access to those positions.

Not everyone has been given the runway to develop judgment because the orgs they’ve been in didn’t trust junior people with anything real.

And not everyone has the safety net to take career risks. The people who’ll thrive under this shift are disproportionately the people who already had structural advantages.

The shift is real. The direction is clear. But I keep coming back to something that the frameworks and the forecasts don’t really address: the people most exposed to this transition are often the ones who did exactly what they were told. They built expertise inside systems that rewarded specialization. They were reliable. They showed up.

The fact that the system is now changing its mind about what it values is not a personal failure on their part. It’s a structural one.

I don't have a satisfying answer for those people. What I'd offer instead: the discomfort of not knowing where you stand is more honest than the confidence of someone who thinks they do. Start there. It's not nothing.

The three-question test for whether your role survives the next five years

Most of the “is your job AI-proof” frameworks I’ve seen are reassurance dressed up as analysis. They ask things like “do you work with people?” or “do you use creativity?” and then confirm that yes, you’re probably fine. That’s not useful. Plenty of roles involving people and creativity are going to be restructured significantly.

Here’s a more useful frame. Three questions. Answer them about your actual day last Thursday, not about your job description.